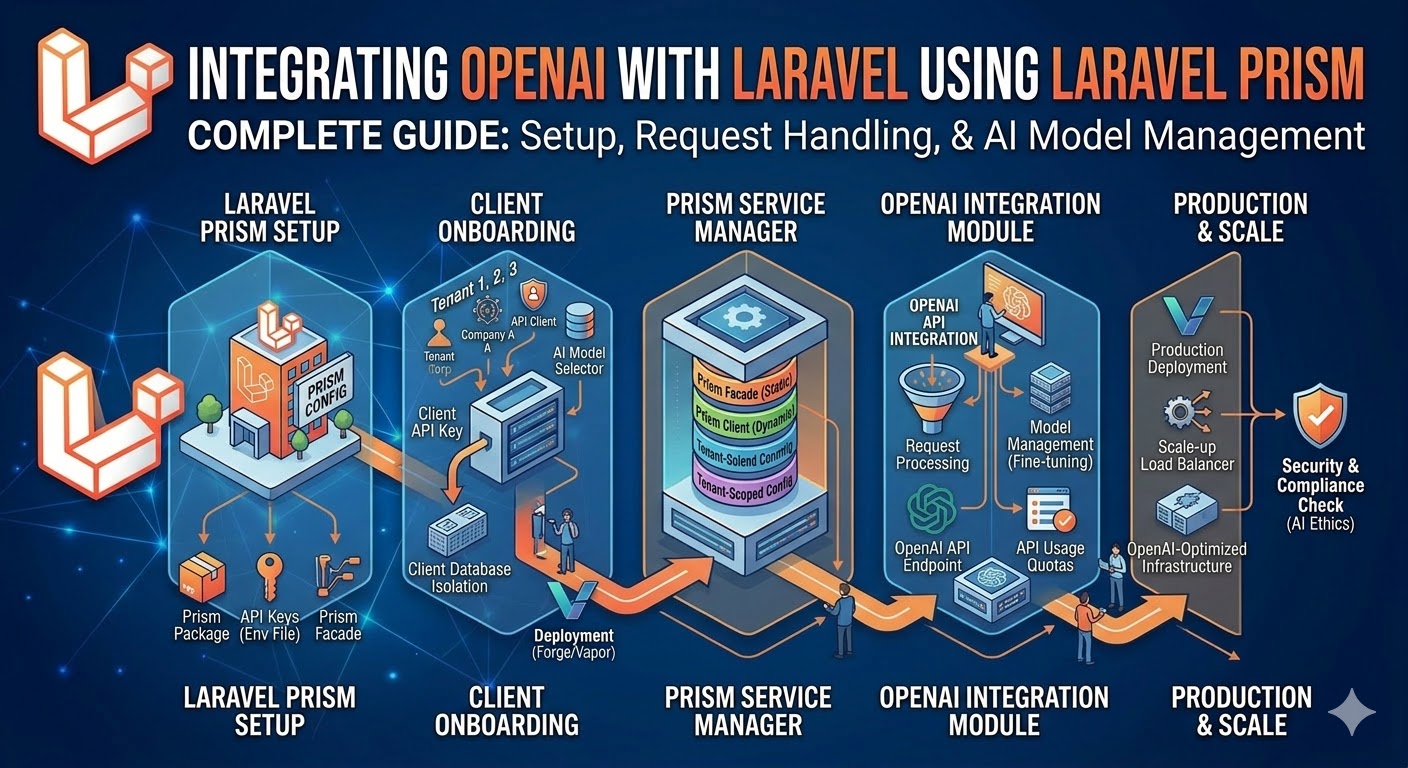

Integrating OpenAI with Laravel using Laravel Prism

A practical guide to building AI-powered features in Laravel using Laravel Prism — the clean way to work with multiple LLM providers without vendor lock-in.

Why Laravel Prism?

Every Laravel developer adding AI to their app faces the same dilemma: you pick OpenAI, write your integration, then six months later a client asks for Claude, or Gemini, or a self-hosted model. Ripping out and replacing the integration is painful — especially when AI calls are scattered across ten different service classes.

Laravel Prism solves this by providing a unified, fluent interface for every major LLM provider. You write your AI logic once against the Prism API. Switching from GPT-4o to Claude 3.5 Sonnet is a one-line config change. Your business logic stays untouched.

I have used Prism on four production Laravel applications, including a RAG-powered support chatbot that handles 2,000+ queries per day across two LLM providers simultaneously. The unified interface has saved significant refactoring time each time a client wanted to switch or test a new model.

Installation and Configuration

    composer require echolabs/prism
    php artisan vendor:publish --tag=prism-config

Add your provider credentials to .env:

    OPENAI_API_KEY=sk-...
    ANTHROPIC_API_KEY=sk-ant-...
    GEMINI_API_KEY=...

Configure your default provider in config/prism.php:

    'default_provider' => 'openai',

    'providers' => [
        'openai' => [
            'driver' => 'openai',
            'api_key' => env('OPENAI_API_KEY'),
            'default_model' => 'gpt-4o',
        ],
        'anthropic' => [
            'driver' => 'anthropic',
            'api_key' => env('ANTHROPIC_API_KEY'),
            'default_model' => 'claude-3-5-sonnet-20241022',
        ],
    ],

Your First AI Feature: Text Generation

Generate a product description from a few keywords:

    use EchoLabs\Prism\Facades\Prism;
    use EchoLabs\Prism\Enums\Provider;

    $response = Prism::text()
        ->using(Provider::OpenAI, 'gpt-4o')
        ->withSystemPrompt('You are a copywriter for a SaaS product. Write clear, benefit-focused descriptions.')
        ->withPrompt('Write a 2-sentence description for a project management tool that uses AI to auto-prioritize tasks.')
        ->generate();

    echo $response->text;

    // Output: "Smart Priority automatically ranks your to-do list using AI,
    // so you always know exactly what to work on next. Stop spending 20 minutes
    // deciding what matters; let the AI do it in seconds."

The generate() call is synchronous. For production, wrap it in a queued job to avoid blocking your HTTP response.

Structured Outputs with Prism

Unstructured text is hard to work with in application code. Prism's structured output feature lets you define a schema and receive a PHP array that follows it, so there is no manual JSON parsing, no regex, and far less risk of unexpected keys.

    use EchoLabs\Prism\Schema\ObjectSchema;
    use EchoLabs\Prism\Schema\StringSchema;
    use EchoLabs\Prism\Schema\NumberSchema;
    use EchoLabs\Prism\Schema\BooleanSchema;

    $schema = new ObjectSchema(
        name: 'ticket_analysis',
        description: 'Analysis of a support ticket',
        properties: [
            new StringSchema('sentiment', 'positive, neutral, or negative'),
            new StringSchema('category', 'billing, technical, feature_request, or other'),
            new NumberSchema('urgency', 'urgency score 1-10, where 10 is critical'),
            new BooleanSchema('needs_escalation', 'true if the ticket should be escalated to a senior agent'),
            new StringSchema('suggested_response', 'A one-paragraph suggested reply to the customer'),
        ],
        requiredFields: ['sentiment', 'category', 'urgency', 'needs_escalation', 'suggested_response']
    );

    $response = Prism::structured()
        ->using(Provider::OpenAI, 'gpt-4o')
        ->withSchema($schema)
        ->withPrompt("Analyze this support ticket:\n\n" . $ticket->body)
        ->generate();

    $analysis = $response->structured;

    // $analysis is a PHP array matching your schema

    $ticket->update([
        'sentiment' => $analysis['sentiment'],
        'category' => $analysis['category'],
        'urgency' => $analysis['urgency'],
        'needs_escalation' => $analysis['needs_escalation'],
    ]);

    if ($analysis['needs_escalation']) {
        EscalateTicket::dispatch($ticket, $analysis['suggested_response']);
    }

This pattern, structured AI output flowing directly into your database and job queue, is how you build AI features that are reliable and testable, not just demo-able.
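Even with a schema in place, it can be worth checking the returned array before it reaches the database. The following is a minimal sketch in plain PHP, assuming the ticket analysis fields defined above; validateTicketAnalysis() is a hypothetical helper for illustration and is not part of Prism.

```php
<?php

/**
 * Hypothetical helper (not part of Prism): checks that an analysis array
 * has the fields and value ranges the ticket schema describes.
 *
 * @param  array<string, mixed> $analysis
 * @return array<string, mixed> the analysis with urgency clamped to 1-10
 * @throws InvalidArgumentException when a field is missing or invalid
 */
function validateTicketAnalysis(array $analysis): array
{
    $required = ['sentiment', 'category', 'urgency', 'needs_escalation', 'suggested_response'];

    $allowed = [
        'sentiment' => ['positive', 'neutral', 'negative'],
        'category'  => ['billing', 'technical', 'feature_request', 'other'],
    ];

    // Every required field must be present, even if its value is falsy.
    foreach ($required as $field) {
        if (!array_key_exists($field, $analysis)) {
            throw new InvalidArgumentException("Missing field: {$field}");
        }
    }

    // Enumerated fields must use one of the values named in the schema.
    foreach ($allowed as $field => $values) {
        if (!in_array($analysis[$field], $values, true)) {
            throw new InvalidArgumentException("Unexpected {$field} value");
        }
    }

    if (!is_numeric($analysis['urgency'])) {
        throw new InvalidArgumentException('urgency must be numeric');
    }

    if (!is_bool($analysis['needs_escalation'])) {
        throw new InvalidArgumentException('needs_escalation must be a boolean');
    }

    // Clamp the score into the 1-10 range the schema describes.
    $analysis['urgency'] = max(1, min(10, (int) round((float) $analysis['urgency'])));

    return $analysis;
}
```

Calling this on $response->structured before $ticket->update() turns an unexpected model answer into an exception you can log or retry, instead of a bad row in the tickets table.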

Building a Streaming Chat Endpoint

Users expect ChatGPT-style streaming responses where text appears word by word. Prism supports streaming via Server-Sent Events (SSE):

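    // routes/api.php

    use EchoLabs\Prism\Enums\Provider;
    use EchoLabs\Prism\Facades\Prism;
    use EchoLabs\Prism\ValueObjects\Messages\AssistantMessage;
    use EchoLabs\Prism\ValueObjects\Messages\UserMessage;
    use Illuminate\Http\Request;
    use Illuminate\Support\Facades\Route;

    Route::post('/chat', function (Request $request) {
        $validated = $request->validate([
            'message' => ['required', 'string', 'max:2000'],
            'history' => ['array'],
            'history.*.role' => ['required', 'in:user,assistant'],
            'history.*.content' => ['required', 'string'],
        ]);

        return response()->stream(function () use ($validated) {
            // Rebuild the conversation so far as Prism message objects.
            $messages = collect($validated['history'] ?? [])
                ->map(fn ($m) => $m['role'] === 'user'
                    ? new UserMessage($m['content'])
                    : new AssistantMessage($m['content'])
                )->all();

            $messages[] = new UserMessage($validated['message']);

            $stream = Prism::text()
                ->using(Provider::Anthropic, 'claude-3-5-sonnet-20241022')
                ->withSystemPrompt('You are a helpful assistant.')
                ->withMessages($messages)
                ->stream();

            foreach ($stream as $chunk) {
                // One SSE frame per chunk: "data: <json>" followed by a blank line.
                echo "data: " . json_encode(['text' => $chunk->text]) . "\n\n";

                // ob_flush() raises a notice when no output buffer is active.
                if (ob_get_level() > 0) {
                    ob_flush();
                }
                flush();
            }

            echo "data: [DONE]\n\n";
        }, 200, [
            'Content-Type' => 'text/event-stream',
            'Cache-Control' => 'no-cache',
            'X-Accel-Buffering' => 'no', // stop nginx from buffering the stream
        ]);
    });

Multi-Provider Fallback Strategy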

OpenAI has outages. Claude has rate limits. In production, you need a fallback strategy so an LLM provider outage does not take down your AI features entirely.

    // app/Services/AIService.php

    use EchoLabs\Prism\Enums\Provider;
    use EchoLabs\Prism\Facades\Prism;
    use Illuminate\Support\Facades\Log;

    class AIService
    {
        // Tried in order; the first provider that answers wins.
        private array $providers = [
            [Provider::OpenAI, 'gpt-4o'],
            [Provider::Anthropic, 'claude-3-5-sonnet-20241022'],
            [Provider::OpenAI, 'gpt-4o-mini'], // cheaper fallback
        ];

        public function generate(string $prompt, string $systemPrompt = ''): string
        {
            $lastException = null;

            foreach ($this->providers as [$provider, $model]) {
                try {
                    $response = Prism::text()
                        ->using($provider, $model)
                        ->withSystemPrompt($systemPrompt)
                        ->withPrompt($prompt)
                        ->generate();

                    return $response->text;
                } catch (\Exception $e) {
                    Log::warning("AI provider {$provider->value}/{$model} failed", [
                        'error' => $e->getMessage(),
                    ]);

                    $lastException = $e;
                }
            }

            // Every provider failed. Guard against an empty provider list,
            // where there is no exception to rethrow.
            throw $lastException ?? new \RuntimeException('No AI providers configured.');
        }
    }

Token Usage and Cost Tracking

AI costs can spiral quickly if you are not tracking usage. Prism exposes token usage on every response:

    $response = Prism::text()
        ->using(Provider::OpenAI, 'gpt-4o')
        ->withPrompt($prompt)
        ->generate();

    $usage = $response->usage;

    AIUsageLog::create([
        'user_id' => auth()->id(),
        'tenant_id' => app('tenant')->id,
        'provider' => 'openai',
        'model' => 'gpt-4o',
        'prompt_tokens' => $usage->promptTokens,
        'completion_tokens' => $usage->completionTokens,
        'cost_usd' => $this->calculateCost('gpt-4o', $usage),
        'feature' => 'ticket_analysis',
    ]);

With this log table, you can build a cost dashboard per tenant, per feature, and per model — and set spending alerts before a runaway prompt costs you thousands of dollars.
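The logging snippet calls a calculateCost() method that is not shown. One possible shape for it is sketched below in plain PHP. This is an assumption, not the original implementation: it takes the token counts as integers and a price table as an argument, and the prices in the usage note are placeholders, not real provider pricing.

```php
<?php

/**
 * Hypothetical cost calculator (a sketch, not the article's method).
 *
 * @param array<string, array{input: float, output: float}> $pricesPerMillion
 *        USD per 1,000,000 tokens, keyed by model name; supply current
 *        rates from your own config rather than hardcoding them.
 */
function calculateCost(
    array $pricesPerMillion,
    string $model,
    int $promptTokens,
    int $completionTokens
): float {
    if (!isset($pricesPerMillion[$model])) {
        throw new InvalidArgumentException("No pricing configured for model: {$model}");
    }

    $price = $pricesPerMillion[$model];

    // Input and output tokens are billed at different rates.
    $cost = ($promptTokens / 1_000_000) * $price['input']
          + ($completionTokens / 1_000_000) * $price['output'];

    // Keep six decimals so very small requests are not stored as 0.
    return round($cost, 6);
}
```

With a placeholder table such as ['gpt-4o' => ['input' => 2.00, 'output' => 8.00]], a request with 1,000 prompt tokens and 500 completion tokens comes to 0.002 + 0.004 = 0.006 USD. In the logging code you would pass $usage->promptTokens and $usage->completionTokens.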

Testing AI Features Without Making Real API Calls

Tests that hit the real OpenAI API are slow, flaky, and cost money. Prism ships with a fake that lets you assert the correct prompts were sent and return controlled responses:

    use EchoLabs\Prism\Testing\PrismFake;

    public function test_ticket_is_analysed_on_creation(): void
    {
        Prism::fake([
            new TextResult(text: json_encode([
                'sentiment' => 'negative',
                'category' => 'billing',
                'urgency' => 8,
                'needs_escalation' => true,
                'suggested_response' => 'We apologize for the billing issue...',
            ])),
        ]);

        $ticket = Ticket::factory()->create([
            'body' => 'I was charged twice this month and nobody is responding!',
        ]);

        $this->assertEquals('negative', $ticket->fresh()->sentiment);
        $this->assertTrue($ticket->fresh()->needs_escalation);

        Prism::assertCallCount(1);
        Prism::assertPromptContains('I was charged twice this month');
    }

Your full test suite runs in seconds, catches regressions reliably, and costs nothing in API credits.

Frequently Asked Questions

Is Laravel Prism production-ready?

Yes. I have used it on multiple production applications handling thousands of AI requests per day. The package is actively maintained by Echo Labs and tracks upstream API changes promptly. For anything mission-critical, always implement the multi-provider fallback pattern described above so you are not dependent on a single provider's uptime.

How does Prism compare to using the OpenAI PHP SDK directly?

The OpenAI PHP SDK gives you complete control over every API parameter. Prism gives you a provider-agnostic interface, built-in structured outputs, streaming helpers, and a test fake — at the cost of some low-level flexibility. For most application-layer AI features, Prism is the better choice. For fine-tuned model management, batch processing, or embeddings at scale, you may want the underlying SDK for those specific use cases.

Does Prism support embeddings and vector search?

Prism supports embedding generation via its embeddings() API. For the vector storage and similarity search layer, you combine it with pgvector (PostgreSQL) or a dedicated vector database like Qdrant or Pinecone. See my companion article on building a RAG chatbot with Laravel and pgvector for a complete walkthrough of the embedding + storage + retrieval pipeline.

How do I handle Prism rate limit errors in production?

Wrap your Prism calls in a retry policy using Laravel's built-in retry() helper with exponential backoff, and catch RateLimitException specifically. For sustained high volume, consider a request queue with Redis that smooths out bursts and respects your provider's requests-per-minute limit.
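To make the backoff idea concrete, here is a framework-free sketch of the retry loop. It is an illustration under assumptions: RateLimitedException is a hypothetical stand-in for whatever rate-limit exception your Prism version throws, and retryOnRateLimit() is not a Prism or Laravel function.

```php
<?php

/** Hypothetical stand-in for the rate-limit exception thrown by your client. */
class RateLimitedException extends RuntimeException
{
}

/**
 * Runs $operation, retrying on rate-limit errors and doubling the wait
 * before each new attempt.
 *
 * @param callable      $operation   the call to retry, e.g. a closure wrapping a Prism request
 * @param int           $maxAttempts total attempts, including the first
 * @param int           $baseDelayMs wait before the second attempt, in milliseconds
 * @param callable|null $sleep       injectable sleeper (receives milliseconds), handy in tests
 */
function retryOnRateLimit(
    callable $operation,
    int $maxAttempts = 4,
    int $baseDelayMs = 500,
    ?callable $sleep = null
): mixed {
    $sleep ??= static fn (int $ms) => usleep($ms * 1000);

    for ($attempt = 1; ; $attempt++) {
        try {
            return $operation();
        } catch (RateLimitedException $e) {
            if ($attempt >= $maxAttempts) {
                throw $e; // out of attempts: surface the last error
            }

            // With the default base delay: 500 ms, then 1000 ms, then 2000 ms.
            $sleep($baseDelayMs * (2 ** ($attempt - 1)));
        }
    }
}
```

Only the rate-limit exception triggers a retry here; any other failure propagates immediately, so genuine bugs are not hidden behind repeated attempts. Passing the sleeper in as a parameter keeps tests fast, since they can record the delays instead of waiting.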
